A great thing about working with software is that despite it being a relatively young industry, there’s a staggering number of near-perfect abstractions we get to build upon. If you want to open a link in your app, you don’t need to know much about the intricacies of rendering engines, TCP/IP, and so on, let alone lower-level frameworks, assembly code, or how a CPU works. Regardless of your platform and framework choices, there’s likely something ready-made that you can just drop in, wire up with a few lines, and mostly forget about.

Every once in a while, though, something happens that makes you open up a previously opaque component to figure out exactly what the hell is going on in there, and this is a post about one of those instances.

I just wanted to flip an image

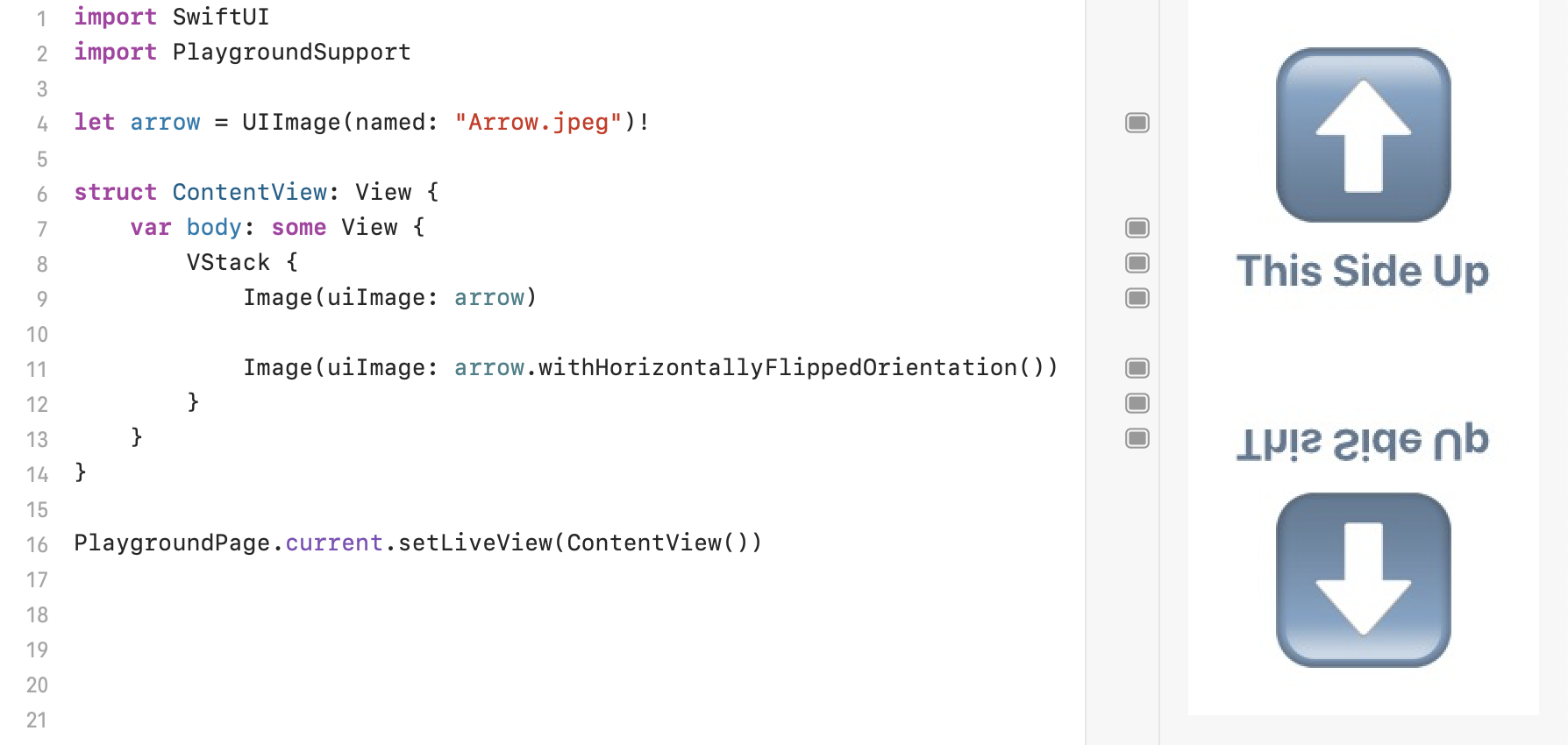

I’ve been working on a little project that involves some photo manipulation, and sometimes I need to flip an image horizontally. I went through the documentation, and it turns out UIImage has a handy built-in method to do just that: withHorizontallyFlippedOrientation().

I put it to use, and it seemed to be working alright: the images from my library that I tested it with all flipped just fine. Then I got to some images that it didn’t flip horizontally, but vertically instead. This was really strange. Thankfully, though, it always mis-flipped the same images, and in the same way, so I had a whole bunch of reproducible test cases to dig into.

Here’s what the documentation for the method says:

The returned image's imageOrientation property contains the mirrored version of the original image's orientation. For example, if the original orientation is UIImage.Orientation.left, the new orientation is UIImage.Orientation.leftMirrored.

That all seems right; nothing seems to be amiss at a glance. What exactly is the imageOrientation property, though?

Decoding Images

Many image formats, such as JPEG and HEIC, carry a package of Exif metadata alongside the image data. Exif data can contain information such as the make of the camera, the capture settings, the time and location, and also an orientation. The orientation records which way the image was captured relative to how it should be shown, and whether it has been mirrored.

The orientation property exists to make it possible to rotate or mirror an image without having to decode and then re-encode all of its data with respect to the new orientation, which can also be a lossy operation.

You can read the orientation of a UIImage through the imageOrientation property. It has eight possible values: up, down, left, right, and the mirrored versions of all of them. It’s worth taking a second here to look at what any specific orientation actually means. Here’s a snippet from Apple’s documentation for UIImage.Orientation (emphasis added):

The UIImage class automatically handles the transform necessary to present an image in the correct display orientation according to its orientation metadata, so an image object's imageOrientation property simply indicates which transform was applied.

An imageOrientation of left, then, means that the image data is rotated leftwards, i.e. anticlockwise, by 90 degrees to display it.
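To make that concrete, here’s a small sketch in plain Swift (no UIKit) that stands in for pixel data with a grid of characters. The `Grid` type and the rotation helpers are hypothetical, made up purely for illustration. Because orientation-unaware software shows a left-oriented image rotated 90° clockwise, the stored data is modelled as the intended picture rotated clockwise, and displaying it means rotating it back anticlockwise:

```swift
/// A toy stand-in for pixel data: one array of characters per row, top to bottom.
typealias Grid = [[Character]]

func makeGrid(_ rows: [String]) -> Grid { rows.map { Array($0) } }

/// Rotates a grid 90° clockwise (a w-column grid becomes w rows tall).
func rotatedClockwise(_ g: Grid) -> Grid {
    let h = g.count, w = g[0].count
    return (0..<w).map { i in (0..<h).map { j in g[h - 1 - j][i] } }
}

/// Rotates a grid 90° anticlockwise.
func rotatedCounterClockwise(_ g: Grid) -> Grid {
    let h = g.count, w = g[0].count
    return (0..<w).map { i in (0..<h).map { j in g[j][w - 1 - i] } }
}

// The picture we actually want to see: 2 rows of 3 pixels.
let intended = makeGrid(["abc",
                         "def"])

// Orientation-unaware software shows a `left` image rotated 90° clockwise,
// so model its stored data as the intended picture rotated clockwise.
let storedLeft = rotatedClockwise(intended)   // rows "da", "eb", "fc"

// Honouring `left` means rotating the data 90° anticlockwise for display.
let displayed = rotatedCounterClockwise(storedLeft)
print(displayed == intended)  // true
```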

Back to UIImage.withHorizontallyFlippedOrientation(), then. This time I started looking at these orientation values as I flipped images, and a pattern cropped up. All of the images that flipped correctly had an orientation with the rawValue of 0 (meaning up) to begin with, and ended up with 4 (upMirrored). Meanwhile, the ones that ended up flipped vertically started off with 3 (right) and ended up with an orientation of 7 (rightMirrored).
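Note that these raw values follow UIImage.Orientation’s own ordering, not the numbering of the Exif orientation tag, which runs from 1 to 8 and is also what CGImagePropertyOrientation’s raw values follow. The sketch below is a hypothetical lookup table (`OrientationCase` is made up for illustration, not an Apple type) pairing each case name with both numbers, which can save some confusion when comparing against Exif data shown by other tools:

```swift
/// Hypothetical stand-in for the eight orientation cases (not an Apple type).
/// Raw values follow UIImage.Orientation's ordering: up = 0 through rightMirrored = 7.
enum OrientationCase: Int, CaseIterable {
    case up = 0, down, left, right, upMirrored, downMirrored, leftMirrored, rightMirrored

    /// The Exif orientation tag value for the same-named case, which is also
    /// the raw value of the matching CGImagePropertyOrientation case.
    var exifValue: Int {
        switch self {
        case .up: return 1
        case .upMirrored: return 2
        case .down: return 3
        case .downMirrored: return 4
        case .leftMirrored: return 5
        case .right: return 6
        case .rightMirrored: return 7
        case .left: return 8
        }
    }
}

// Print a small conversion table: case name, UIKit raw value, Exif value.
for orientation in OrientationCase.allCases {
    print(orientation, orientation.rawValue, orientation.exifValue)
}
```

So the problematic right → rightMirrored flip shows up as 3 → 7 in UIKit terms, but as 6 → 7 in Exif terms.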

So the method works exactly as its documentation, quoted earlier, describes. What’s the issue here, then? It turns out mirroring doesn’t quite do what you’d think it does.

Mirrored Orientations

First, let’s take a look at the documentation for the right orientation:

If an image is encoded with this orientation, then displayed by software unaware of orientation metadata, the image appears to be rotated 90° counter-clockwise. (That is, to present the image in its intended orientation, you must rotate it 90° clockwise.)

An image with a right orientation, then, is rotated rightwards, i.e. clockwise, by 90 degrees to display it, which lines up with the documentation we’ve just read.

And then let’s take a look at the documentation for the rightMirrored orientation:

If an image is encoded with this orientation, then displayed by software unaware of orientation metadata, the image appears to be horizontally mirrored, then rotated 90° clockwise. (That is, to present the image in its intended orientation, you can rotate 90° counter-clockwise, then flip horizontally.)

Did you catch that? A rightMirrored image is rotated left, and not right, by 90 degrees and then mirrored horizontally.

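Working through it, this transformation amounts to a vertical mirroring: rotating anticlockwise where the data needed a clockwise rotation adds an extra 180° turn, and a 180° turn followed by a horizontal flip is the same as a vertical flip. You can see it in action below. This image with an original rightward orientation is flipped vertically instead of horizontally.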
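Here’s that effect reproduced in plain Swift, as a hypothetical sketch that models pixel data as a grid of characters (the helpers are illustrative, not UIKit API). The stored data of a right-oriented image is modelled as the intended picture rotated anticlockwise, so rotating it clockwise restores the picture. Displaying the same data as rightMirrored instead, following Apple’s description quoted above, produces the picture flipped vertically:

```swift
/// A toy stand-in for pixel data: one array of characters per row, top to bottom.
typealias Grid = [[Character]]

func makeGrid(_ rows: [String]) -> Grid { rows.map { Array($0) } }

func rotatedClockwise(_ g: Grid) -> Grid {
    let h = g.count, w = g[0].count
    return (0..<w).map { i in (0..<h).map { j in g[h - 1 - j][i] } }
}

func rotatedCounterClockwise(_ g: Grid) -> Grid {
    let h = g.count, w = g[0].count
    return (0..<w).map { i in (0..<h).map { j in g[j][w - 1 - i] } }
}

func flippedHorizontally(_ g: Grid) -> Grid { g.map { Array($0.reversed()) } }
func flippedVertically(_ g: Grid) -> Grid { Array(g.reversed()) }

let intended = makeGrid(["abc",
                         "def"])

// A `right` image: its stored data needs a 90° clockwise turn to display correctly.
let stored = rotatedCounterClockwise(intended)
precondition(rotatedClockwise(stored) == intended)

// withHorizontallyFlippedOrientation() retags it as `rightMirrored`, which is
// displayed by rotating 90° anticlockwise and then flipping horizontally.
let shownAsRightMirrored = flippedHorizontally(rotatedCounterClockwise(stored))

print(shownAsRightMirrored == flippedHorizontally(intended))  // false
print(shownAsRightMirrored == flippedVertically(intended))    // true
```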

If you rejigger the operations to perform the mirroring first, though, the same transformation works out to mirroring horizontally and then rotating rightwards. I suppose that might justify calling it the rightMirrored orientation, since both of those operations do take place, though you’d be forgiven for expecting it to mean rotating rightwards to obtain the original image, followed by the horizontal mirroring.
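The reordering is easy to check on a couple of points. This sketch in plain Swift treats each operation as a map on hypothetical integer pixel coordinates with y pointing up (`Point` and the helper functions are made up for illustration). Since all of these maps are linear, agreeing on the two basis vectors is enough to show that both orders describe the same transformation:

```swift
/// A pixel position with y pointing up (illustrative model, not a UIKit type).
struct Point: Equatable { var x: Int; var y: Int }

func rotateClockwise(_ p: Point) -> Point { Point(x: p.y, y: -p.x) }
func rotateCounterClockwise(_ p: Point) -> Point { Point(x: -p.y, y: p.x) }
func flipHorizontally(_ p: Point) -> Point { Point(x: -p.x, y: p.y) }

// rightMirrored as Apple describes it: rotate anticlockwise, then mirror.
func asDocumented(_ p: Point) -> Point { flipHorizontally(rotateCounterClockwise(p)) }
// The rejiggered order: mirror first, then rotate clockwise.
func mirrorFirst(_ p: Point) -> Point { rotateClockwise(flipHorizontally(p)) }

// Both maps are linear, so agreeing on the basis vectors means agreeing everywhere.
let basis = [Point(x: 1, y: 0), Point(x: 0, y: 1)]
print(basis.map(asDocumented) == basis.map(mirrorFirst))  // true
```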

It’s clear, then, that the rightMirrored orientation is not the horizontally flipped dual for right. So what is? leftMirrored, as it happens. From Apple’s documentation:

If an image is encoded with this orientation, then displayed by software unaware of orientation metadata, the image appears to be horizontally mirrored, then rotated 90° counter-clockwise. (That is, to present the image in its intended orientation, you can rotate it 90° clockwise, then flip horizontally.)

Correctly Flipping Images

Armed with this knowledge, we can write our own function to flip images horizontally. Here’s what that looks like:

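```swift
import UIKit

extension UIImage.Orientation {
    /// The orientation that displays as a horizontal mirror of `self`.
    /// `left` and `right` pair with the opposite side's mirrored case, because
    /// `rightMirrored` rotates anticlockwise (and `leftMirrored` clockwise)
    /// before mirroring.
    var horizontallyFlipped: UIImage.Orientation {
        switch self {
        case .up: return .upMirrored
        case .upMirrored: return .up
        case .down: return .downMirrored
        case .downMirrored: return .down
        case .left: return .rightMirrored
        case .rightMirrored: return .left
        case .right: return .leftMirrored
        case .leftMirrored: return .right
        @unknown default: return self
        }
    }
}

extension UIImage {
    func flippedHorizontally() -> UIImage {
        // Fast path: reuse the same bitmap and only change the orientation tag.
        if let cgImage = self.cgImage {
            return UIImage(cgImage: cgImage, scale: scale, orientation: imageOrientation.horizontallyFlipped)
        }

        // Fallback for images not backed by a CGImage (e.g. CIImage-backed ones):
        // redraw the image into a horizontally mirrored context.
        let format = UIGraphicsImageRendererFormat()
        format.scale = scale

        return UIGraphicsImageRenderer(size: size, format: format).image { context in
            // Mirror the x axis, then draw at -width so the image lands back in view.
            context.cgContext.concatenate(CGAffineTransform(scaleX: -1, y: 1))
            self.draw(at: CGPoint(x: -size.width, y: 0))
        }
    }
}
```

We now know the correct duals of each of the orientations, and so can flip all of them correctly. For up and down, which are rotated by 0 and 180 degrees respectively, the order of operations doesn’t matter, so the built-in method already works as expected for those.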
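As a sanity check on the table in horizontallyFlipped, here’s a UIKit-free sketch that models each orientation’s display transform as a 2×2 integer matrix, following Apple’s descriptions quoted earlier (rotate first, then mirror horizontally for the mirrored cases; y points up). The `Orientation` enum and `Transform` type are hypothetical stand-ins. A flip counts as correct when the new orientation displays as a horizontal mirror of what the old one displays. Every entry in the table passes, while a naive mapping that just toggles the Mirrored suffix fails for the four left/right cases:

```swift
/// Hypothetical 2×2 integer matrix [[a, b], [c, d]] acting on (x, y), y up.
struct Transform: Equatable {
    let a, b, c, d: Int

    /// `lhs * rhs` applies `rhs` first, then `lhs`.
    static func * (lhs: Transform, rhs: Transform) -> Transform {
        Transform(a: lhs.a * rhs.a + lhs.b * rhs.c,
                  b: lhs.a * rhs.b + lhs.b * rhs.d,
                  c: lhs.c * rhs.a + lhs.d * rhs.c,
                  d: lhs.c * rhs.b + lhs.d * rhs.d)
    }

    static let identity  = Transform(a: 1, b: 0, c: 0, d: 1)
    static let rotate180 = Transform(a: -1, b: 0, c: 0, d: -1)
    static let rotateCW  = Transform(a: 0, b: 1, c: -1, d: 0)   // (x, y) -> (y, -x)
    static let rotateCCW = Transform(a: 0, b: -1, c: 1, d: 0)   // (x, y) -> (-y, x)
    static let flipH     = Transform(a: -1, b: 0, c: 0, d: 1)   // (x, y) -> (-x, y)
}

/// Stand-in for UIImage.Orientation's eight cases (hypothetical, UIKit-free).
enum Orientation: CaseIterable {
    case up, down, left, right, upMirrored, downMirrored, leftMirrored, rightMirrored

    /// Maps stored pixel coordinates to displayed ones.
    var displayTransform: Transform {
        switch self {
        case .up:            return Transform.identity
        case .down:          return Transform.rotate180
        case .left:          return Transform.rotateCCW
        case .right:         return Transform.rotateCW
        case .upMirrored:    return Transform.flipH
        case .downMirrored:  return Transform.flipH * Transform.rotate180
        case .leftMirrored:  return Transform.flipH * Transform.rotateCW
        case .rightMirrored: return Transform.flipH * Transform.rotateCCW
        }
    }

    /// The same table as the UIImage.Orientation extension.
    var horizontallyFlipped: Orientation {
        switch self {
        case .up: return .upMirrored
        case .upMirrored: return .up
        case .down: return .downMirrored
        case .downMirrored: return .down
        case .left: return .rightMirrored
        case .rightMirrored: return .left
        case .right: return .leftMirrored
        case .leftMirrored: return .right
        }
    }

    /// Naive mapping that only toggles the "Mirrored" suffix.
    var suffixToggled: Orientation {
        switch self {
        case .up: return .upMirrored
        case .upMirrored: return .up
        case .down: return .downMirrored
        case .downMirrored: return .down
        case .left: return .leftMirrored
        case .leftMirrored: return .left
        case .right: return .rightMirrored
        case .rightMirrored: return .right
        }
    }
}

/// True if `new` displays as a horizontal mirror of what `old` displays.
func isHorizontalFlip(from old: Orientation, to new: Orientation) -> Bool {
    new.displayTransform == Transform.flipH * old.displayTransform
}

let tableWorks = Orientation.allCases.allSatisfy { isHorizontalFlip(from: $0, to: $0.horizontallyFlipped) }
let naiveFailures = Orientation.allCases.filter { !isHorizontalFlip(from: $0, to: $0.suffixToggled) }
print(tableWorks)                        // true
print(naiveFailures.map { "\($0)" })     // ["left", "right", "leftMirrored", "rightMirrored"]
```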

If the UIImage isn’t backed by a CGImage, there’s nothing to retrieve, so instead we can spin up a UIGraphicsImageRenderer. The renderer respects the image’s orientation when drawing it, so flipping its context horizontally before drawing gets us the result we want.¹
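The arithmetic behind that fallback can be checked by hand. In this sketch, plain `Double`s stand in for `CGFloat`s and `renderedX(ofColumn:width:)` is a hypothetical helper: a column at `x` in the image is drawn at `x - width` because of the `draw(at:)` offset, and the `scaleX: -1` transform then maps it to `width - x`, the mirror image of `x`:

```swift
/// Hypothetical helper: where column `x` of the source image ends up in the
/// renderer's original (unflipped) coordinates after
/// concatenate(CGAffineTransform(scaleX: -1, y: 1)) and draw(at: (-width, 0)).
func renderedX(ofColumn x: Double, width: Double) -> Double {
    let flippedSpaceX = x - width   // draw(at:) shifts the image left by `width`
    return -flippedSpaceX           // scaleX: -1 negates x on the way out
}

let width = 300.0
print(renderedX(ofColumn: 0, width: width))    // 300.0: the left edge lands on the right
print(renderedX(ofColumn: 100, width: width))  // 200.0
print(renderedX(ofColumn: 250, width: width))  // 50.0
```

Without the `-width` offset, the mirrored image would be drawn entirely at negative x, outside the renderer’s bounds.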

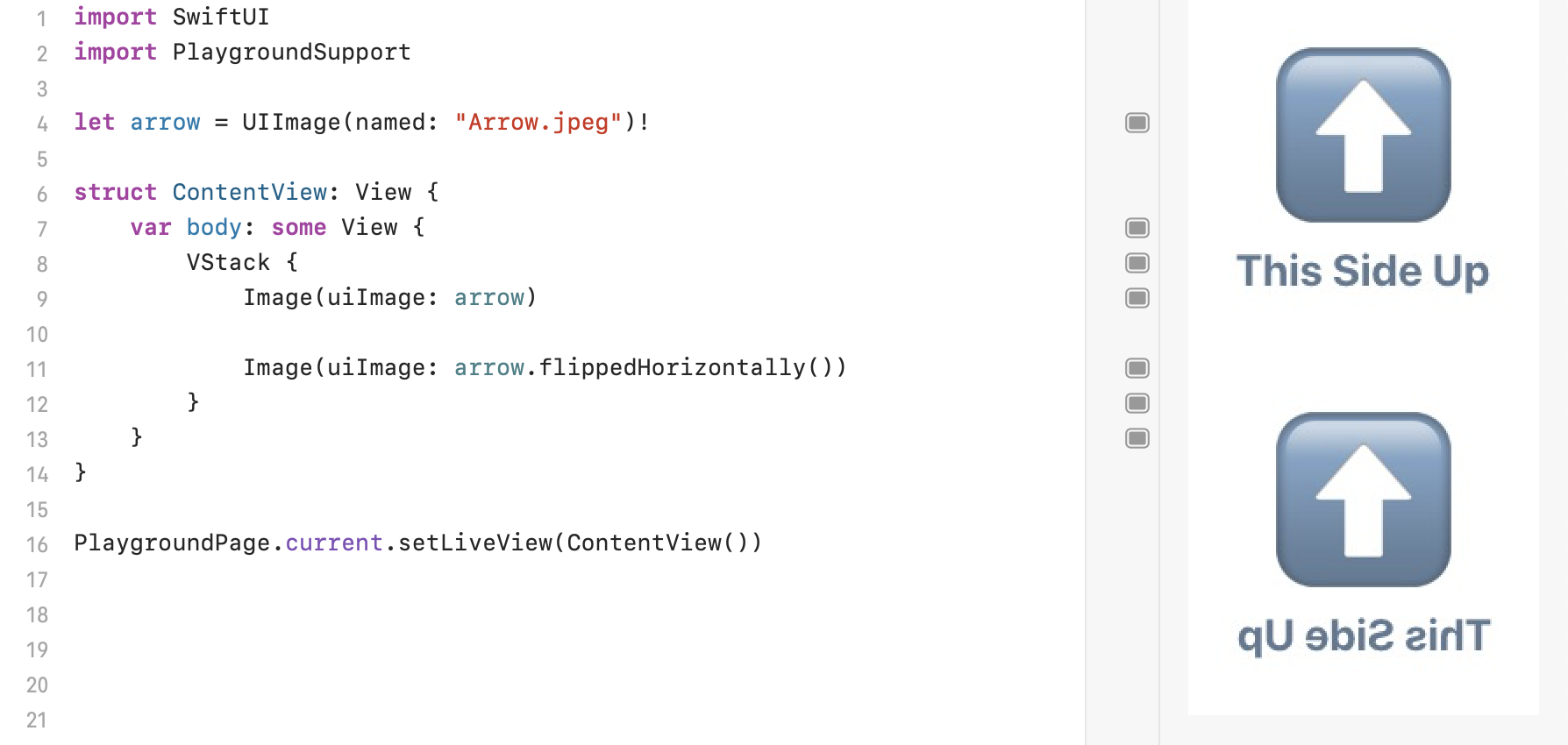

Put it all together, and we now have horizontal image flipping that works correctly for all orientations.

I’ve now figured out why the UIImage flipping method was bugging out and have a working method of my own, but I remain curious as to why the naming was set up that way.

From my limited research, it seems that the Exif format doesn’t really have fixed names for each of the 8 orientations, nor do there seem to be any unofficial or de facto standards, so I’m not sure why these particular ones were picked.

I initially thought the discrepancy might have to do with Core Graphics’s inverted coordinate system with respect to UIKit, and some misunderstanding of the relation between UIImage.Orientation and CGImagePropertyOrientation on my part. But Apple’s documentation for the latter says that the cases are supposed to line up, so I’m not quite sure what’s going on. Am I missing something clear as day to people with more experience in graphics/photography? If so, please do let me know!

While I remain unsure about the origins of the naming for the orientation cases, I do think that the current implementation of the UIImage method is a bug. I’ve filed FB9877366 about this.

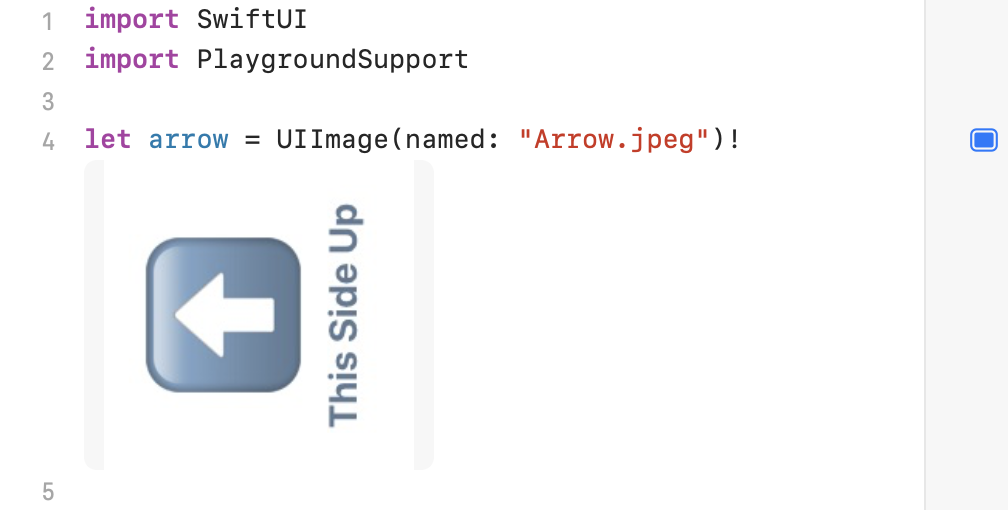

Also, my original intention was to demonstrate this bug using the inline previews in Xcode Playgrounds, but it turns out those ignore the orientation entirely. I’ve filed FB9877374 about that.

¹ I’m not entirely sure whether this preserves the colour space of the original image, though, so beware of that when using this approach.